Resume Template Language I Will Tell You The Truth About Resume Template Language In The Next 4 Seconds

Hi Readers,

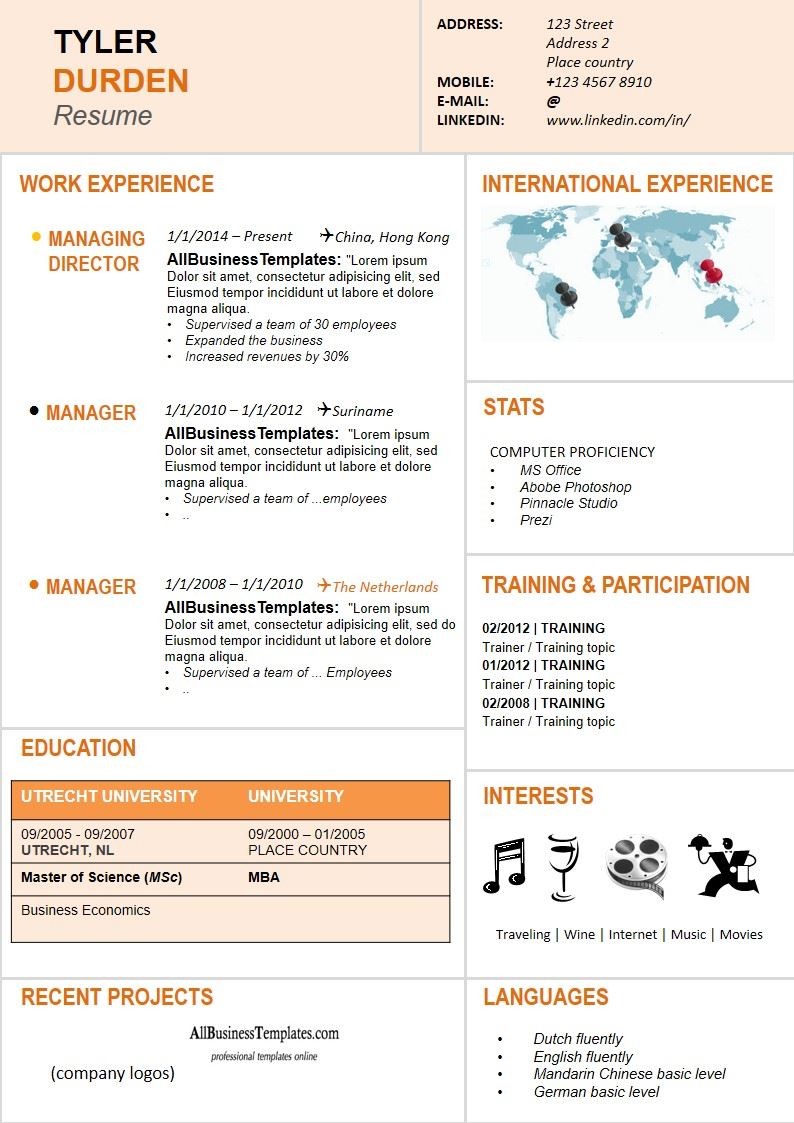

Dynamic Resume template | Templates at ..

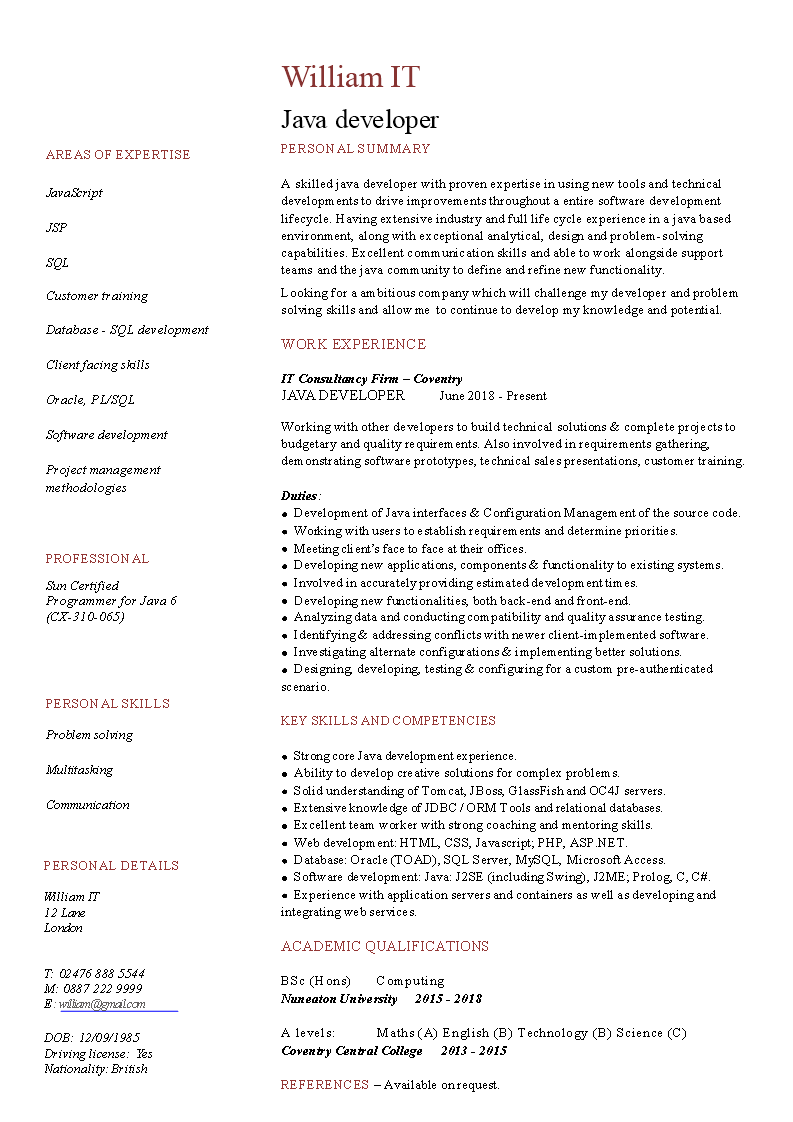

Java Developer Curriculum Vitae | Templates at ..

![17 Infographic Resume Templates [Free Download]](https://www.ah-studio.com/wp-content/uploads/2020/03/17-infographic-resume-templates-free-download-resume-template-language.jpg)

17 Infographic Resume Templates [Free Download]

The 2020 update of my best-selling Ladders Resume Guide is now available on Amazon in print and Kindle editions. I’ve included a short excerpt below.

This updated edition is designed to make your resume writing go easily. In about 90 minutes, I provide the fundamentals on how to create a professional two-page resume, share templates to help you do that quickly, and provide detailed step-by-step advice on writing bullet points and a professional summary that will make you stand out.

Ladders Resume Guide is based on the hundreds of thousands of $100K to $500K professionals we’ve helped over the past 17 years, and the success of their hundreds of thousands of applications with our employers. I provide you with the tools, techniques, and tips you need to transform your professional experience into a professional resume. I review the right structure for organizing your past jobs into a job history and provide the best words and phrases for your past achievements.

Here’s that excerpt I promised you…

The single biggest trick

So when it comes to resumes, the biggest stumbling block is this: you want to tell people how it felt to be you, but your audience wants to know what it felt like to be your boss.

Writing your resume is a unique experience. Even though it concerns you, writing a resume is not like writing a diary. Although it covers a period of your life, it isn’t how you experienced those days and years.

When we sit down to write a resume, we usually start by writing what it felt like to be you: this school was followed by that education, which was followed by this first job, which was followed by that next promotion, and so on.

You share, in bullet point format, the details that are interesting to you and the drama that was involved in getting here, and you provide a compelling plotline to your own life story. For you, each job, each accomplishment, each bullet point was an adventure, a battle, a triumph.

This is the easiest version of your life story to tell because it feels like something we’ve been doing all our lives. It’s the version of the story that you’re familiar with, the version you tell your family and friends, your new acquaintances and your oldest school buddies.

And once you get going, it gets easier to write about yourself and start filling the page: your jobs, your duties, your promotions, your projects, your transfers, your staff. And when you get to editorializing, with achievements “despite” budget shortfalls, or “in the face of” industry declines, or “with minimal support,” it can feel like a vindication, a validation, a victory.

Each bullet on your resume carries real emotional weight, like a little movie you can play back in your head about “that time when…”, or “my first job out in San Antonio…”

But the emotional weight of each line of your resume has very little correlation with the professional weight your boss will assign to it. And the little career movies you’re replaying in your head may have no impact on your goal of landing interviews.

If there’s one trick I’d like you to understand about getting your resume right, it’s this:

Your resume is not about you.

Sure, it’s made up of your achievements, background, experiences and credentials, but it’s not about you. It’s about the benefits your future boss will get from hiring you.

Your resume is not about you, any more than the iPad ad is about the transistors, and code, and chips inside. While those are the materials that make the magic possible, the iPad ads entice you to buy the magic, not the bill of materials.

Similarly, your resume is about your future boss’s needs and the benefits she’ll get by hiring you for the role.

A boss is looking for output, not input. A boss is looking for outcomes, not duties and responsibilities. A boss wants to know the end of the story, the bottom line, the score at the end of the game, not the feelings you had while delivering them.

In fact, if you’re looking to move up in your next job, your future boss is two levels above your current role. So your ability to understand their needs, predicaments, hopes, requirements, and best guesses for the role is understandably bound by the limits of your own experience.

That’s one of the reasons why even HR pros who have been hiring for decades have a tough time writing a resume (and with many other parts of the job search process). Much as doctors are the worst patients and lawyers are bad clients, HR people have loads of experience in hiring others, but almost no experience in hiring someone like themselves or their boss. Being a great buyer has very little to do with being a great seller.

Gaining the right distance to write about yourself in the form of a professional advertisement is difficult. Seeing yourself as a product is hard. Portraying yourself not as “you”, but as the sum total of everything your future employer is buying, is something you don’t often do. We don’t know exactly who the audience is. We don’t know what we’re supposed to say. We’re not sure how we’re coming across. And we feel awkward about speaking so openly to an abstract group of people in our head. The novelty of the experience, and the strangeness of the perspective, can leave you feeling adrift, unmoored, a bit lost in the landscape.

Writing a resume is not like how you think of yourself in any other part of your life.

So the balancing act of mastering your own emotional response to past achievements, and weighing them objectively in order to convey the professional value of each achievement and its benefit to future employers, is the most important “trick” to resume writing. It’s a new skill. You’ll get better with focus, awareness, and practice.

To read the rest, head over to Ladders Resume Guide and Ladders Interviews Guide today!
