Resume Template Graphic Designer Five Great Resume Template Graphic Designer Ideas That You Can Share With Your Friends

“I’ve noticed on Etsy, and a few other websites, they sell formats that are interesting to look at, but I often find that it can be hard to extract the right information from them,” she says. “It’s a fine balance between finding something that you think looks good, but that represents the right information. … I definitely err on the side of fewer bells and whistles and really having the experience stand out.”


Welcome to my blog. This time I’ll be talking about resume templates for graphic designers. Here is the first image:

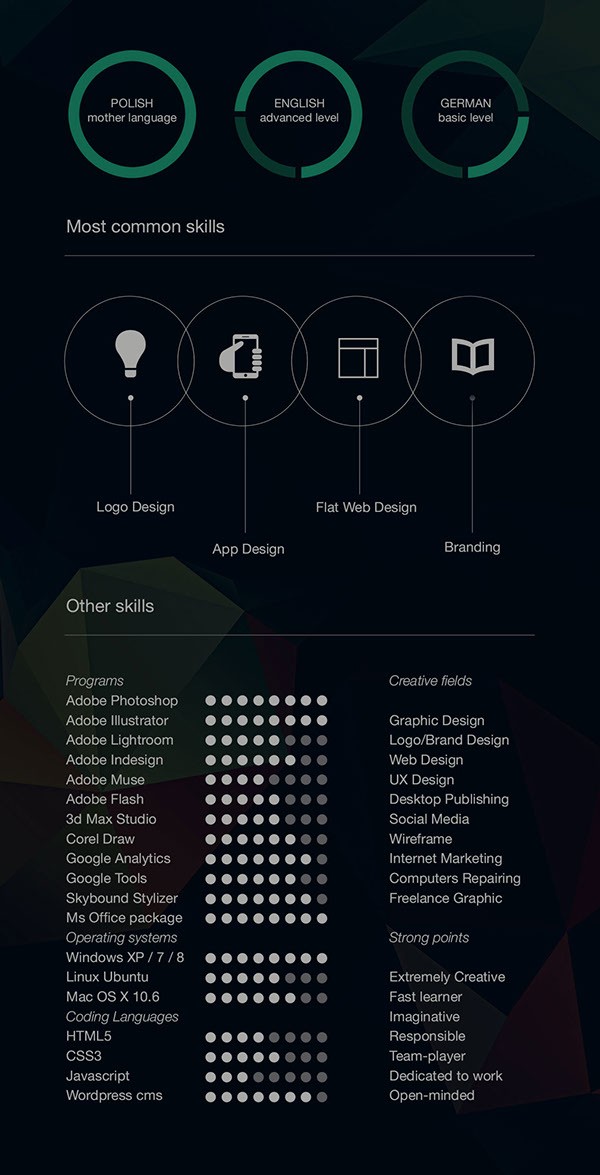

10 Free Resume-CV Templates Designs For Creative, Media ..

What do you think of the image above? Is it a good one? If you think so, I’ll show you some more images below:

If you would like to keep any of these resume template images for graphic designers, simply click the save icon on the image and it will be downloaded to your computer. To see new and updated images on this topic, please follow us on Twitter, Path, Instagram and Google Plus, or bookmark this page. We try to post fresh graphics every day. We hope you enjoy your stay and find what you are looking for.

Thank you for visiting our website. Today we’re happy to share a topic we found especially interesting to review: resume template ideas for graphic designers. Many people are looking for information about it, and you are probably one of them, aren’t you?

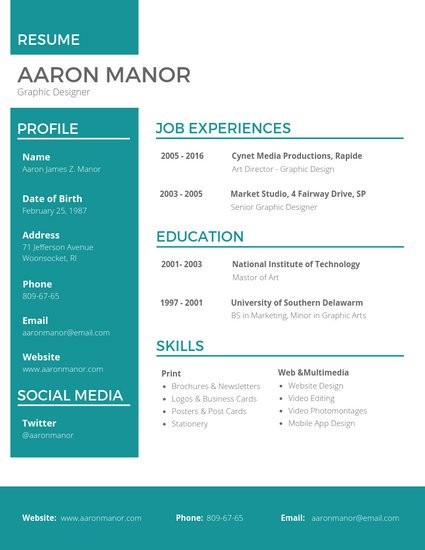

FREE Resume Template by Fernando Báez on Dribbble

Designer Resume Template PSD Set by PSD Freebies on Dribbble

30 Best Word Resume Templates | Design | Graphic Design ..

Customize 67+ Professional Resume templates online – Canva